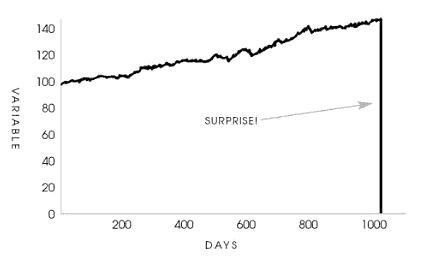

The life of a Thanksgiving turkey can teach us a lot about risk. I believe Nassim Nicholas Taleb’s turkey chart and the accompanying quote (from The Black Swan) sum it up best:

Consider a turkey that is fed every day. Every single feeding will firm up the bird’s belief that it is the general rule of life to be fed every day by friendly members of the human race “looking out for its best interests,” as a politician would say. On the afternoon of the Wednesday before Thanksgiving, something unexpected will happen to the turkey. It will incur a revision of belief.

The Black Swan, by Nassim Nicholas Taleb

Source: The Black Swan, by Nassim Nicholas Taleb; Wikimedia Commons

Taleb’s turkey chart and story are entertaining, but they also offer much wisdom about risk management.

The past does not predict the future

In Taleb’s story, nothing in the turkey’s life had indicated what would occur on Thanksgiving Day. The past had no predictive power in forecasting the future. On Taleb’s turkey chart, days 1 through 1,000 are completely different from day 1,001 and beyond.
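
To make the point concrete, here is a minimal sketch in Python of a turkey that forecasts from its own history. The numbers are illustrative assumptions, not Taleb’s data: wellbeing is assumed to rise by one unit per day of feeding, and day 1,001 is assumed to drop to zero. The helper `fit_line` is a hypothetical name for an ordinary least-squares straight-line fit.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Assumed history: the turkey's wellbeing rises one unit per day for 1,000 days.
days = list(range(1, 1001))
wellbeing = [float(d) for d in days]

# The turkey's "model": extrapolate the trend it has always observed.
slope, intercept = fit_line(days, wellbeing)
forecast_day_1001 = slope * 1001 + intercept

# Assumed outcome: the Wednesday before Thanksgiving.
actual_day_1001 = 0.0

print("Forecast for day 1,001:", forecast_day_1001)
print("Actual on day 1,001:", actual_day_1001)
print("Forecast error:", forecast_day_1001 - actual_day_1001)
```

Under these assumptions the fit to days 1 through 1,000 is perfect, and the forecast for day 1,001 is still wrong by the turkey’s entire accumulated wellbeing. A better fit to the past would not help, because the information that matters is not in the history.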

Similarly, we humans often assume the future will be similar to the past.

- We believe that stability will continue. For instance, who predicted that a suicide in Tunisia would lead to the Arab Spring and the downfall of decades-long dictatorships in Egypt and Libya?

- We believe that good times will continue. In 2019, who predicted that someone eating a bat or a lab leak would lead to a global pandemic that would reshape our society and economy for years?

- We believe that bad times will continue. I find this assumption less common (because humans are generally optimistic, I think), but why do we call the modernization of Singapore or Oman a miracle? Perhaps because we believe it is improbable to go from mud and bricks to steel and glass in such a short time.

In addition to the above, Taleb notes that investors are often deluded by confirmation bias as well. Many investors attribute their results to skill rather than luck, not realizing that “a high tide lifts all boats.” In bull markets and bubbles, terrible decisions are often rewarded handsomely as hot assets soar in price. Investors double down on bad decisions until something changes, the tide goes out, and (in Buffett’s words) we find out who has been swimming naked. Unfortunately, in every market cycle some investors own assets that look like the Taleb turkey chart.

As Howard Marks says, “…trees don’t grow to the sky, and few things go to zero.” Understanding that today’s conditions are likely to change is the key to not being a turkey. This is why investor protection rules have resulted in this disclaimer on nearly every piece of investment marketing: “Past performance is no guarantee of future results.”

Despite what we believe, and however strongly we believe it, the future is unknown.

Risk is difficult to model

Risks are difficult to model for many reasons, but one reason is that rare events are rare! We do not have sufficient data to estimate their probability or impact. Taleb’s turkey had never experienced what was about to happen.

We cannot predict earthquakes or their magnitudes. Nobody could have predicted that a strong earthquake in the Pacific Ocean would generate an enormous tsunami that destroyed a nuclear power plant (as well as its backup safety features), or what impact that would have on energy policy in Europe and on geopolitical issues between the EU and Russia. I don’t believe anyone was even aware of these risks before the Fukushima earthquake/tsunami, much less modeled them. The events are rare, and the sequential scenarios are too numerous and complex to model.

Our record is not much better even when we’re aware of the risk. For instance, there have been many pandemics throughout history, but we were not well prepared for covid. Our leaders did not appreciate the scientific and/or behavioral nuances of pandemic planning. Furthermore, nothing like covid had ever occurred in such a globalized world. Previous plagues spread across cities and continents over months, years, and decades. Covid spread around the globe within weeks. The 2020 pandemic was unlike any past pandemic.

Ignorance is bliss

Since we do not know what risks exist and/or how probable they are, we often ignore the risks. We may think they don’t exist or are too improbable to worry about. That was certainly the case with covid. I recall debating with a family member who said the covid pandemic was abnormally rare even though pandemics occur with some regularity. It is just that there are rarely multiple pandemics in a single generation, so we forget.

There is also the issue of human memory and recency bias. There had not been any major airborne pandemics in the US within a generation or two, so we took the risk less seriously. We believe that we are smarter and more advanced, with better healthcare, than past generations. Yet we were still caught flat-footed during covid.

Information asymmetry

As Taleb notes in his book, the turkey’s surprise is not a surprise to the butcher. This is because the turkey does not have all of the information. If the turkey had a copy of Taleb’s chart for other turkeys, it might have known.

Unfortunately, we humans do not have complete information either, and we rarely acknowledge it. In his famous book “Thinking, Fast and Slow,” Daniel Kahneman discusses his abbreviation WYSIATI, or “what you see is all there is.” The concept of WYSIATI describes our tendency to create narratives based on the information that we have rather than admitting that there is a lot of information that we do not have.

What we see is not all that there is and our observations lead us to mistake the odds.

Note: Narratives can lead us astray in many areas of our life, even our giving! Here’s an example from the holiday season: Is Operation Christmas Child good or bad?

We think we know which black swans to expect, but we miss the real ones

The bottom line is that we consider, anticipate, and worry about risks that we can imagine. Yet we often miss the risks that end up occurring. As Josh Wolfe says, “Failure comes from a failure to imagine failure.” We cannot always imagine black swans, but we should try to avoid the common mistakes that Taleb’s turkey made.